If you build with AI tooling today, you probably move fast.

You install wrappers, SDKs, gateways, agent frameworks, and helper libraries without thinking too much about it. That is normal. It is how modern teams ship.

But the recent LiteLLM incident is a reminder that AI infrastructure now carries the same supply chain risk as the rest of software.

What happens when a dependency sitting in the middle of your LLM stack becomes the attack path?

That is what made this incident worth paying attention to.

What happened

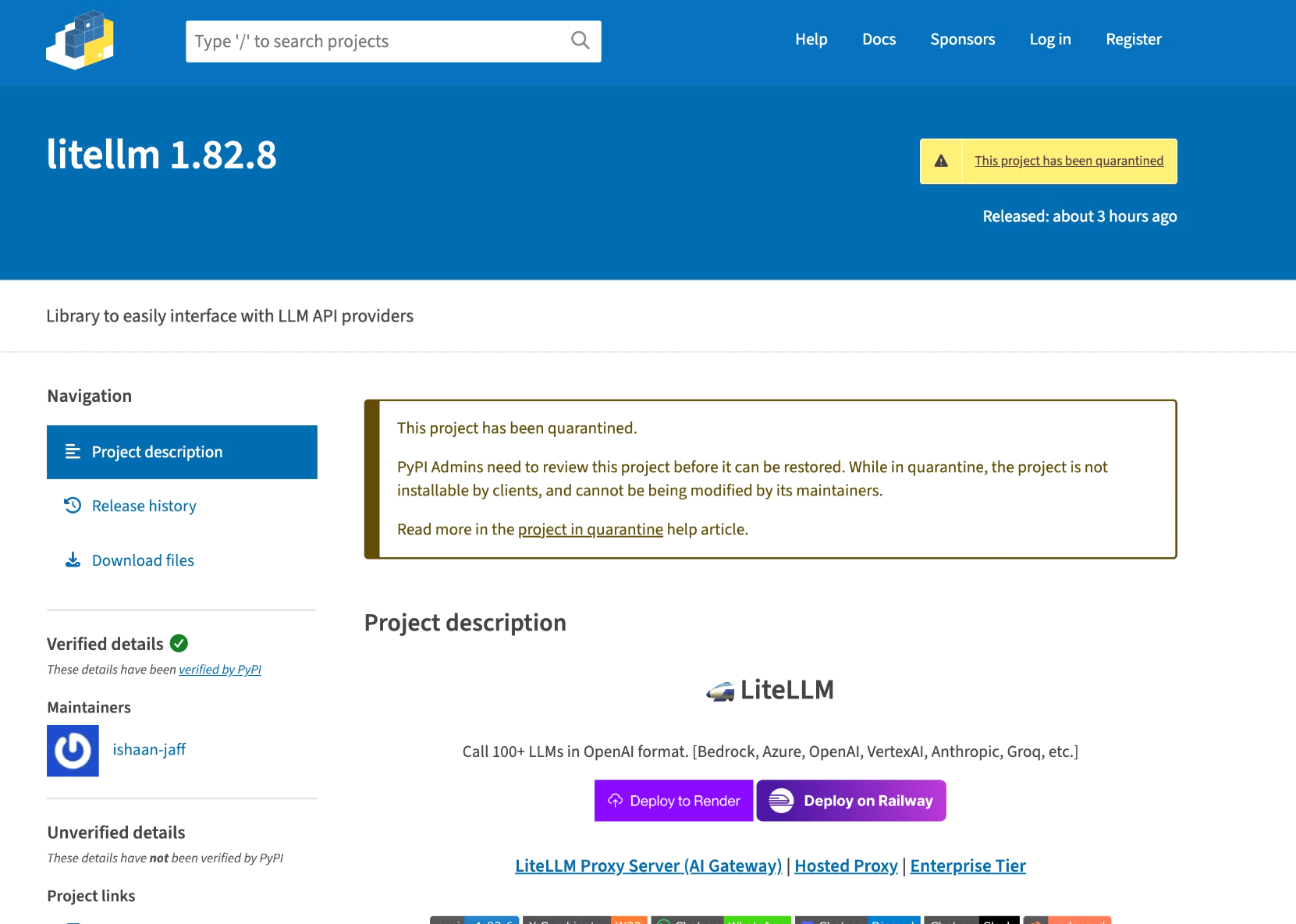

Multiple community reports flagged LiteLLM versions 1.82.7 and 1.82.8 on PyPI as compromised and warned against installing them. A Hacker News post described suspicious behavior immediately after install, including severe resource usage and a payload added to proxy_server.py that decoded and executed another file. A commenter also warned that in 1.82.8, merely importing the package could trigger the malicious behavior.

The same warning spread quickly through the AI infrastructure community. A Reddit thread in r/LocalLLaMA explicitly told users not to update to 1.82.7 or 1.82.8, and pointed to an incident write-up describing it as a supply chain attack.

That matters because LiteLLM is often used as a central piece of LLM infrastructure across applications, gateways, agent systems, and internal AI platforms.

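The release contains a malicious .pth file (litellm_init.pth). Python's site module processes .pth files found in site-packages at interpreter startup and executes any line in them that begins with import, so the file runs automatically on every Python process startup when LiteLLM is installed in the environment, whether or not the process ever imports LiteLLM. No corresponding tag or release exists on the LiteLLM GitHub repository. The package appears to have been uploaded directly to PyPI, bypassing the normal release process.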
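To make the .pth mechanism concrete, here is a minimal sketch that lists .pth files containing lines Python would execute at startup. The function names are hypothetical and the script is only an audit aid, not a detector for this specific payload. Because a malicious .pth file runs on every startup of the affected environment, it is safer to run this from a separate, trusted interpreter and pass the suspect site-packages path as an argument.

```python
import sys
import sysconfig
from pathlib import Path


def executable_pth_lines(pth_path):
    """Return the lines in a .pth file that Python's site module would execute."""
    text = Path(pth_path).read_text(encoding="utf-8", errors="replace")
    # site.py executes any line that starts with "import" followed by a space or tab;
    # every other non-comment line is treated as a path to add to sys.path.
    return [ln for ln in text.splitlines() if ln.startswith(("import ", "import\t"))]


def scan_site_dirs(dirs):
    """Map each .pth file in the given directories to its executable lines."""
    findings = {}
    for d in dirs:
        directory = Path(d)
        if not directory.is_dir():
            continue
        for pth in sorted(directory.glob("*.pth")):
            hits = executable_pth_lines(pth)
            if hits:
                findings[str(pth)] = hits
    return findings


if __name__ == "__main__":
    # Pass suspect site-packages paths as arguments; with no arguments,
    # fall back to this interpreter's own site-packages directory.
    targets = sys.argv[1:] or [sysconfig.get_paths()["purelib"]]
    for path, hits in scan_site_dirs(targets).items():
        print(path)
        for line in hits:
            print("    " + line[:120])
```

A hit is not proof of compromise. Some legitimate tools, such as editable installs, also ship .pth files with import lines, so treat the output as a list of files to review by hand.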

Kudos to PyPI for taking quick action on this. The affected versions were quarantined promptly, preventing further installs while the investigation continued.

Why this matters

AI tooling often gets trusted faster than traditional infrastructure.

A team might pin a database driver carefully, but casually upgrade an LLM wrapper because it feels like application code. In reality, tools like LiteLLM can sit close to the most sensitive parts of an AI stack:

- Provider API keys

- Internal prompts and context

- Model routing rules

- Usage and billing controls

- MCP and tool integrations

- Internal gateways

- Developer laptops

- CI runners

That is what makes incidents like this dangerous. The compromise is not only about one bad package version. It is about the trust that package inherits.

What teams should do right now

If your team uses LiteLLM, the safest move is to investigate immediately.

Start with the basics:

- Do not install or use 1.82.7 or 1.82.8

- Check lockfiles, build logs, and recent installs for those versions

- Audit CI jobs, containers, and developer machines that may have pulled them

- Rotate any model provider API keys or secrets that may have been accessible

- Review network and process logs for unusual outbound activity or unexpected process execution after install or import

The key point is simple: if those versions touched an environment with secrets, assume those secrets may be exposed until proven otherwise.
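The lockfile check above can be sketched as a small static scan. This is a rough starting point under stated assumptions: the file name patterns, the function names, and the two formats handled (requirements-style `==` pins, and `name = "..."` / `version = "..."` entries as used in poetry.lock and uv.lock) are illustrative choices. Other formats, such as Pipfile.lock, would need their own parsing. It only reads text files, so it never imports or installs the package.

```python
import re
from pathlib import Path

# Versions named in the community reports.
COMPROMISED = {"1.82.7", "1.82.8"}

# Requirements-style pin, e.g. "litellm==1.82.8" or "litellm[proxy]==1.82.8".
REQ_PIN = re.compile(r"^\s*litellm(?:\[[^\]]*\])?\s*==\s*([0-9][0-9A-Za-z.]*)", re.IGNORECASE)
# TOML lockfile entries, assumed to list name before version inside a [[package]] block.
NAME_LINE = re.compile(r'^\s*name\s*=\s*"litellm"\s*$', re.IGNORECASE)
VERSION_LINE = re.compile(r'^\s*version\s*=\s*"([^"]+)"')

# Hypothetical set of file names to inspect; adjust for your repositories.
FILE_PATTERNS = ("requirements*.txt", "constraints*.txt", "poetry.lock", "uv.lock")


def find_flagged_versions(text):
    """Return (line_number, version) for each reference to a flagged LiteLLM version."""
    hits = []
    in_litellm_block = False
    for lineno, line in enumerate(text.splitlines(), start=1):
        m = REQ_PIN.match(line)
        if m:
            if m.group(1) in COMPROMISED:
                hits.append((lineno, m.group(1)))
            continue
        if line.startswith("[["):          # a new lockfile block begins
            in_litellm_block = False
        elif NAME_LINE.match(line):
            in_litellm_block = True
        elif in_litellm_block:
            m = VERSION_LINE.match(line)
            if m:
                if m.group(1) in COMPROMISED:
                    hits.append((lineno, m.group(1)))
                in_litellm_block = False
    return hits


def scan_tree(root):
    """Scan dependency files under root and map each affected file to its hits."""
    results = {}
    for pattern in FILE_PATTERNS:
        for path in Path(root).rglob(pattern):
            if path.is_file():
                hits = find_flagged_versions(path.read_text(encoding="utf-8", errors="replace"))
                if hits:
                    results[str(path)] = hits
    return results
```

Calling `scan_tree(".")` from a checkout returns a dictionary of affected files. An empty result only means no pin was found in those files. It says nothing about unpinned installs that resolved to a flagged version at build time, which is why build logs and container images still need a separate check.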

The bigger lesson

This is a reminder that AI infrastructure has become part of the software supply chain, and it needs to be treated with the same discipline as any other high-trust component.

Many teams still think about AI libraries as "just developer tooling" or "just a wrapper."

But a package that can proxy requests to multiple model providers, sit in the request path for agent systems, manage keys and routing, and run in production or CI should be treated as a privileged part of the stack.

That is why teams should be stricter about:

- Pinning exact versions in requirements and lockfiles

- Using private registries or vetted mirrors where possible

- Isolating AI gateways from broad infrastructure credentials

- Limiting which secrets are available during install and runtime

- Reviewing AI infrastructure packages as privileged software
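As an illustration of the first item, the following sketch flags requirements entries that are not pinned to an exact version. It is deliberately simplified and rests on assumptions: it handles plain `name==version` lines with optional extras and environment markers, skips pip option lines, and treats everything else (ranges, wildcards, bare names, URL requirements) as unpinned. The function name is hypothetical.

```python
import re

# A line counts as pinned only if it uses "==" with a concrete version (no wildcard),
# optionally followed by an environment marker after ";".
PINNED = re.compile(
    r"^[A-Za-z0-9][A-Za-z0-9._-]*(\[[^\]]*\])?\s*==\s*[0-9][^\s;*]*\s*(;.*)?$"
)


def unpinned_requirements(text):
    """Return (line_number, requirement) for entries not pinned with '=='."""
    loose = []
    for lineno, raw in enumerate(text.splitlines(), start=1):
        # Drop comments and a trailing line-continuation backslash.
        line = raw.split("#", 1)[0].strip().rstrip("\\").strip()
        # Skip blank lines and pip options such as "-r other.txt" or "--hash=...".
        if not line or line.startswith("-"):
            continue
        if not PINNED.match(line):
            loose.append((lineno, line))
    return loose
```

A check like this can run in CI and fail the build when the returned list is not empty. Pinning alone would not have blocked a deliberate upgrade to a compromised version, but it stops builds from silently picking up a new release the moment it is published.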

Final thought

The LiteLLM incident is a good reminder to be more careful about how much trust we hand to fast-moving AI infrastructure.

When something like this happens, the first real question becomes: what is the blast radius?

Which repos pulled it? Which containers baked it in? Which services imported it? Which API keys were present? Which agent workflows or gateways depended on it?

That is where visibility matters.

In Prismor, this is the kind of question teams should be able to answer quickly through the inventory view: where a package exists, which services or repos depend on it, what environments it reached, and how far exposure can spread once trust breaks.

At Prismor, we think this is where developer security is heading next: understanding what ran, where it ran, and how quickly you can map the blast radius when a trusted dependency turns malicious.